Is Your Data Safe with AI Tools? A Complete Privacy & Security Guide (2026)

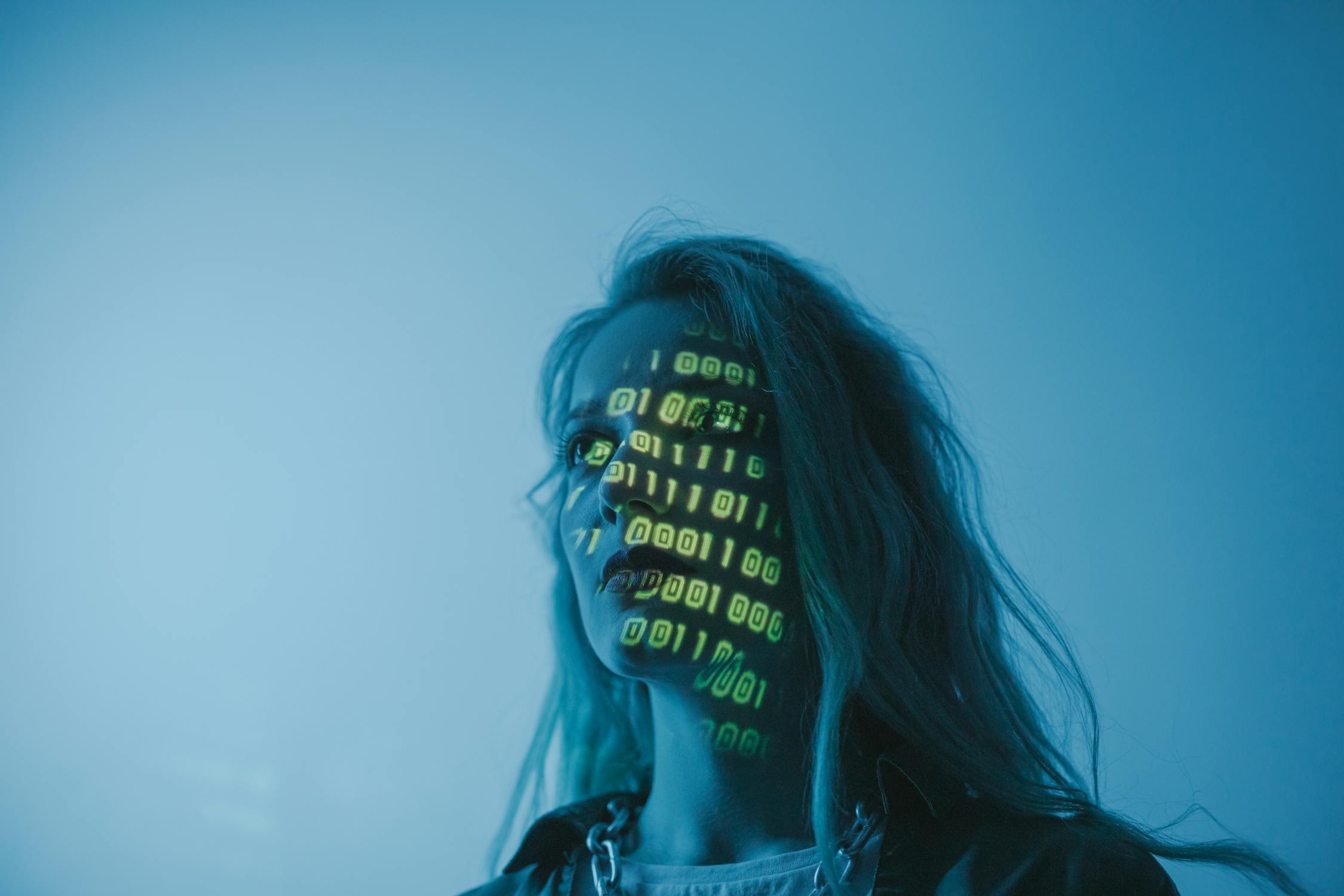

Artificial Intelligence tools have become an essential part of our daily digital lives in 2026. From AI chatbots like ChatGPT and Gemini to content writers, image generators, coding assistants, and productivity tools, millions of users rely on AI every day. But one critical question remains unanswered for most people: Is your data actually safe with AI tools?

When you type prompts, upload files, share personal information, or connect accounts with AI platforms, you are handing over valuable data. Many users assume AI tools are secure simply because they are popular or backed by big companies. However, the reality is more complex. AI tools collect, process, store, and sometimes share user data in ways most people don’t fully understand.

This detailed guide explains how AI tools handle your data, the real privacy risks involved, real-world incidents, and practical steps you can take to protect yourself in the age of artificial intelligence.

1. How AI Tools Collect Your Data

AI tools rely heavily on data to function effectively. Without data, AI systems cannot learn, improve, or deliver accurate results. Understanding what kind of data is collected is the first step toward protecting your privacy.

- User Inputs: Text prompts, voice commands, uploaded documents, images, and code snippets.

- Account Information: Email address, username, subscription details, and usage history.

- Behavioral Data: Time spent using the tool, interaction patterns, feature usage.

- Device & Technical Data: IP address, browser type, operating system, and device identifiers.

- Integrated Data: Information from connected services like Google Drive, calendars, or APIs.

Real-world example: When a freelancer uses an AI writing tool and uploads client briefs, the AI system processes that content. Even if the tool claims not to “store” data permanently, temporary processing still occurs on servers, creating potential exposure.

2. What Happens to Your Data Inside AI Systems?

Once your data enters an AI tool, it goes through multiple stages of processing. This process is rarely visible to the end user, which creates transparency issues.

- Processing: Your data is analyzed by machine learning models to generate responses.

- Temporary Storage: Data may be stored briefly for performance, logging, or security monitoring.

- Model Improvement: Some platforms use user data to improve AI accuracy (unless you opt out).

- Human Review: In certain cases, conversations may be reviewed by humans for safety or training.

Example: Some AI chat platforms have acknowledged in the past that human reviewers could access anonymized conversations. Anonymization is not a complete safeguard: a record can sometimes be re-identified when the details left in it, such as a ZIP code, birth year, or job title, are matched against other datasets.
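
To make the re-identification risk concrete, here is a minimal Python sketch. Every record, field name, and the `reidentify` helper are invented for illustration; it is not how any particular platform stores data. It shows only the general idea: if the attributes left in an "anonymized" record match exactly one person in a second dataset, the record is no longer anonymous.

```python
# Toy illustration only: every record below is invented.
anonymized_chats = [
    {"zip": "90210", "birth_year": 1985, "gender": "F", "topic": "medical test results"},
    {"zip": "10001", "birth_year": 1992, "gender": "M", "topic": "resume feedback"},
]

# A second, hypothetical dataset that includes names (for example, a public profile list).
public_profiles = [
    {"name": "Alice Example", "zip": "90210", "birth_year": 1985, "gender": "F"},
    {"name": "Bob Example", "zip": "10001", "birth_year": 1992, "gender": "M"},
    {"name": "Carol Example", "zip": "10001", "birth_year": 1992, "gender": "M"},
]

# Attributes that are not names but can still narrow down who someone is.
QUASI_IDENTIFIERS = ("zip", "birth_year", "gender")


def reidentify(anonymized, public):
    """Return (name, topic) pairs for records that match exactly one public profile."""
    matches = []
    for record in anonymized:
        key = tuple(record[q] for q in QUASI_IDENTIFIERS)
        candidates = [
            p for p in public
            if tuple(p[q] for q in QUASI_IDENTIFIERS) == key
        ]
        # A unique match means the "anonymous" record now has a name attached.
        if len(candidates) == 1:
            matches.append((candidates[0]["name"], record["topic"]))
    return matches


print(reidentify(anonymized_chats, public_profiles))
```

In this toy data the first record matches a single profile and is re-identified, while the second matches two profiles and stays ambiguous. The fewer people who share your combination of details, the easier you are to single out.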

3. Major Data Privacy Risks with AI Tools

While AI tools are powerful, they also introduce new and serious privacy risks that users often underestimate.

- Data Breaches: AI platforms store massive datasets, making them attractive targets for hackers.

- Data Misuse: Collected data may be used beyond the original purpose.

- Third-Party Sharing: Some AI tools share data with partners or service providers.

- Inference Risks: AI can infer sensitive details you never explicitly shared.

- Lack of Transparency: Privacy policies are often vague or difficult to understand.

Case study: In 2024, a popular AI productivity tool suffered a data exposure incident in which user chat histories were briefly visible to other users. Although the flaw was fixed quickly, the incident showed that even advanced AI companies are not immune to security flaws.

4. Are Free AI Tools More Dangerous Than Paid Ones?

A common belief is that free AI tools are riskier than paid versions. While not always true, free tools often rely more heavily on data monetization.

- Free tools may use your data for advertising or analytics.

- Paid tools are more likely to offer opt-out options for data usage.

- Enterprise plans usually provide stronger privacy guarantees.

Example: A free AI image generator might store prompts and images to improve its model, while a paid enterprise version may guarantee data isolation and deletion.

5. AI Tools and Legal Data Protection (GDPR & Beyond)

Governments worldwide have introduced regulations that limit how companies, including AI providers, can collect and use personal data.

- GDPR: Gives users the right to access, delete, and control their data.

- CCPA: Allows California residents to opt out of the sale of their personal information and to request its deletion.

- EU AI Act: Regulates high-risk AI systems and mandates transparency.

However, regulations are only effective if companies comply and users actively exercise their rights.

6. How You Can Protect Your Data When Using AI Tools

You don’t need to stop using AI tools entirely. Instead, follow smart privacy practices to reduce risks.

- Avoid sharing sensitive personal or financial information.

- Disable data training options when available.

- Use separate accounts for work and personal AI usage.

- Review each tool's privacy settings, not just its terms of service.

- Delete conversation history regularly.

Real-world tip: Many professionals now treat AI tools like public forums—never sharing anything they wouldn’t be comfortable seeing leaked.
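
One way to put the first habit into practice is to scrub obvious identifiers from text before pasting it into an AI tool. The Python sketch below is a rough illustration under stated assumptions: the `redact` function and the three patterns are made up for this guide, they cover only a few common formats, and they will miss plenty of real-world sensitive data. Treat it as a safety net, not a guarantee.

```python
import re

# Deliberately simple, illustrative patterns. They will miss many real formats.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    # US Social Security number written as 123-45-6789.
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace anything matching a known pattern with a placeholder label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


prompt = "Contact jane.doe@example.com, card 4111 1111 1111 1111, SSN 123-45-6789."
print(redact(prompt))
# prints: Contact [EMAIL], card [CARD], SSN [SSN].
```

Names, addresses, and client details have no fixed pattern, so a script like this cannot catch them. A quick manual read before you hit send is still the most reliable check.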

7. The Future of AI Data Safety

The future of AI privacy depends on a balance between innovation and responsibility. Technologies like federated learning, on-device AI, and differential privacy are promising steps forward. Other developments to watch for include:

- More transparent AI policies

- Stronger global regulations

- Privacy-first AI business models

Users who stay informed and cautious will benefit the most from AI while minimizing risks.
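
Of the technologies mentioned in this section, differential privacy is the easiest to show in a few lines. The Python sketch below uses the Laplace mechanism, a standard textbook approach, to publish a count with random noise added so that no single person's presence can be confidently inferred from the result. The function names, the sample data, and the `epsilon` value are assumptions chosen for illustration; production systems involve far more care than this.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count of the items that satisfy `predicate`.

    Adding or removing one person changes a count by at most 1 (sensitivity 1),
    so the Laplace mechanism uses noise with scale 1 / epsilon.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1 / epsilon, rng)


# Invented example: ages of users who asked a health-related question.
ages = [23, 35, 41, 29, 52, 38, 47]
rng = random.Random()

# Smaller epsilon means more noise and stronger privacy, at the cost of accuracy.
noisy = private_count(ages, lambda age: age >= 40, epsilon=1.0, rng=rng)
print(f"Noisy count of users aged 40+: {noisy:.2f}")
```

The true count here is 3, but each run prints a slightly different number. That uncertainty is the point: the published figure stays useful in aggregate while revealing much less about any one individual.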

Conclusion

So, is your data safe with AI tools? The honest answer is: it depends on how the tool is built and how you use it. AI tools are not inherently dangerous, but careless usage and weak privacy practices can expose sensitive information. By understanding how AI collects and processes data, recognizing real risks, and following smart protection strategies, you can safely benefit from AI without sacrificing your privacy. In 2026 and beyond, data awareness is no longer optional—it’s a digital survival skill.